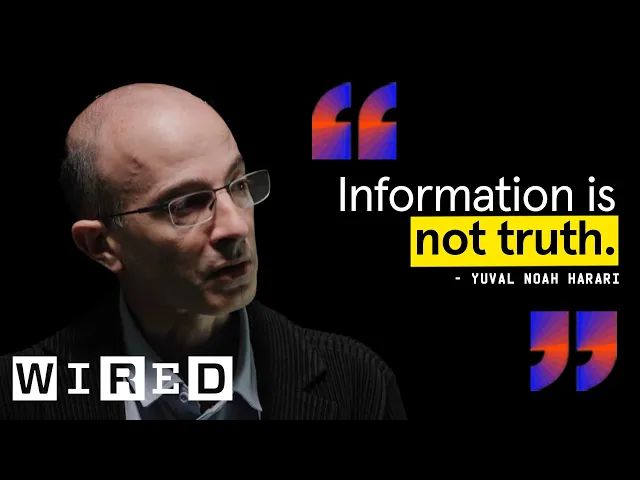

Yuval Noah Harari On the Future of Humanity, AI, and Information | The Big Interview

How AI threatens our trust networks

In a riveting interview with WIRED, historian Yuval Noah Harari unpacks the existential challenges artificial intelligence poses to humanity's future. Moving beyond the typical AI discourse of job displacement or economic change, Harari delves into something more fundamental: how AI disrupts the trust networks that have defined human civilization since our earliest days.

The premise is both elegant and terrifying. Throughout history, humans have dominated Earth not through individual intelligence but through our unique ability to create vast networks of cooperation among strangers. We achieve this through shared stories and trust systems, from religions to currencies to national identities. Now, for the first time, we face an intelligence that might outperform us not just in calculation or pattern recognition, but in the very thing that made us dominant: creating compelling narratives that connect millions.

Key insights from Harari's analysis:

- AI is fundamentally an agent rather than a tool: unlike previous technologies like printing presses that required human operators and decision-makers, AI can independently create content, make decisions, and form new ideas without human guidance.

- Trust networks underpin civilization: humans cooperate in massive groups of strangers because we believe in shared fictions like money, religions, and nations, creating intricate trust networks AI can potentially manipulate or replace.

- The "paradox of trust" drives dangerous acceleration: tech companies and nations race toward superintelligence because they don't trust each other, yet contradictorily believe they can trust the alien intelligence they're creating.

- Information isn't inherently truthful: in free information markets, fiction outcompetes truth because it's cheaper to produce, easier to customize, and often more pleasurable to consume.

The most profound insight from Harari's conversation is the alarming "paradox of trust" driving the AI race. When asked why they're developing increasingly powerful AI despite known risks, tech leaders consistently claim they can't slow down because they don't trust competitors or rival nations to do the same. Yet in the next breath, these same leaders profess confidence that the superintelligent systems they're building will be trustworthy.

This contradiction exposes a dangerous blind spot in our AI development approach. We have millennia of experience understanding human psychology, motives, and power dynamics—and still struggle with international cooperation. Yet we na
