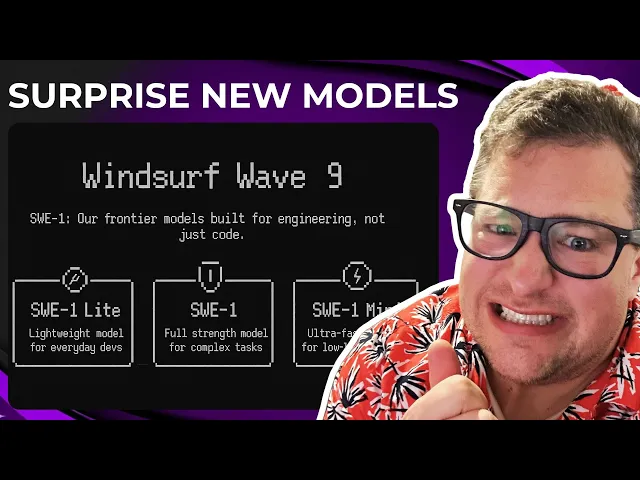

Windsurf’s new AI Models are … interesting

Windsurf AI upends the competitive landscape

In the rapidly evolving artificial intelligence landscape, new models emerge with surprising capabilities that challenge our assumptions about what's possible. The recent introduction of Windsurf's new AI models represents such a moment, potentially reshaping competitive dynamics in a field dominated by familiar names like OpenAI and Anthropic. This development could signal a significant shift in how we evaluate and deploy AI systems in real-world applications.

Key insights from Windsurf's breakthrough

- Windsurf's new SWE-1 model demonstrates exceptional performance on programming tasks while using substantially fewer parameters than competing models, suggesting a more efficient architectural design.
- The model exhibits impressive contextual understanding, producing not just working code but coherent natural language explanations that reveal substantial reasoning capabilities.
- While other models may still lead on some metrics, Windsurf's approach signals a potential paradigm shift in which parameter count matters less than architectural innovation.

The most fascinating aspect of Windsurf's achievement lies in what it reveals about the AI development landscape. Unlike established players with massive resources, Windsurf appears to have taken a fundamentally different approach to model architecture rather than simply scaling existing techniques with more computing power. This suggests we may be entering a new phase of AI development where cleverness trumps brute force.

This matters enormously for the industry as a whole. If smaller, more efficient models can achieve comparable results to massive systems, it democratizes access to advanced AI capabilities. Companies without the resources of OpenAI or Google could potentially develop competitive offerings, broadening the marketplace and accelerating innovation through diverse approaches rather than pure computing scale.

What's particularly noteworthy about this development is how it challenges the "bigger is better" narrative that has dominated AI research in recent years. Since GPT-3 demonstrated the power of scale, the focus has been on increasing parameter counts and training data, with each new model boasting ever-larger numbers. Windsurf's approach suggests there might be significant inefficiencies in these massive models that clever engineering can eliminate.

Consider DeepMind's recent research on "sparse models" that activate only a portion of their parameters for any given task. This work supports the idea that much of a large model's capacity may be redundant for specific applications. Windsurf may have found a way to specifically optimize for
