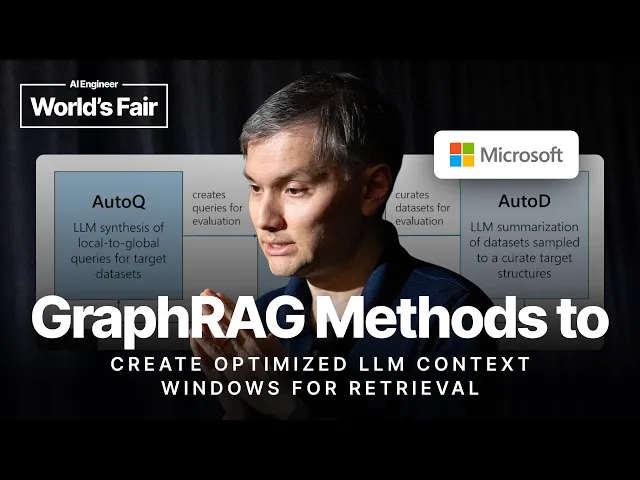

GraphRAG methods to create optimized LLM context windows for Retrieval

GraphRAG unlocks smarter context management for LLMs

In the increasingly complex world of large language models, context management is emerging as a critical battleground for performance optimization. During a recent technical presentation, Microsoft's Jonathan Larson walked through GraphRAG – a sophisticated approach that's transforming how developers can structure information for more effective retrieval and reasoning in LLM applications. This technique addresses fundamental limitations in traditional RAG (Retrieval-Augmented Generation) implementations that have plagued enterprise deployments since these models began scaling into production environments.

GraphRAG departs from conventional RAG by treating context as a structured graph rather than a flat sequence of text chunks. The approach targets what Larson calls the "context barrier": LLMs struggle when the relevant information exceeds their context window, or when answering requires understanding complex relationships between pieces of information.

Key insights from Larson's presentation

- Traditional RAG systems fall short when handling complex relationships between documents or when needing to incorporate metadata. GraphRAG addresses this by creating graph-based context structures that preserve relationships.

- The context window is a critical bottleneck in LLM performance: current models can only process a limited amount of information at once, making intelligent context management essential.

- By representing knowledge as a graph, developers can more effectively prioritize what information gets included in the prompt, leading to better semantic relevance and reasoning capabilities.

- GraphRAG isn't just a theoretical approach: Microsoft has released an open-source implementation, and the presentation pointed to tangible performance improvements across various tasks.

- The method involves creating a knowledge graph where entities and their relationships are explicitly modeled, allowing for more targeted retrieval based on the specific query needs.
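To make the last few points concrete, here is a minimal sketch of graph-based retrieval under a context budget. It is not Microsoft's implementation: the triples, the function names (`build_graph`, `retrieve`, `pack_context`), and the word-count budget are all assumptions made for illustration. A real system would extract entities with an LLM and count tokens with the model's tokenizer.

```python
from collections import defaultdict

# Hypothetical toy triples (subject, relation, object); invented for illustration.
TRIPLES = [
    ("Acme", "acquired", "Beta Labs"),
    ("Beta Labs", "develops", "RouteOpt"),
    ("RouteOpt", "used_by", "Northwind Logistics"),
    ("Gamma Corp", "competes_with", "Acme"),
    ("Delta Bank", "finances", "Gamma Corp"),
]

def build_graph(triples):
    """Index every triple under both of its endpoint entities."""
    graph = defaultdict(list)
    for s, r, o in triples:
        graph[s].append((s, r, o))
        graph[o].append((s, r, o))
    return graph

def retrieve(graph, seeds, max_hops=2):
    """Breadth-first walk from the seed entities; returns (hops, triple) pairs, nearest first."""
    seen_entities = set(seeds)
    seen_triples = set()
    frontier = list(seeds)
    ranked = []
    for hop in range(1, max_hops + 1):
        next_frontier = []
        for entity in frontier:
            for triple in graph.get(entity, []):
                if triple in seen_triples:
                    continue
                seen_triples.add(triple)
                ranked.append((hop, triple))
                # Queue any newly discovered endpoint for the next hop.
                for endpoint in (triple[0], triple[2]):
                    if endpoint not in seen_entities:
                        seen_entities.add(endpoint)
                        next_frontier.append(endpoint)
        frontier = next_frontier
    return ranked

def pack_context(ranked, budget_words):
    """Greedily add facts, closest first, until the word budget is exhausted."""
    lines, used = [], 0
    for _, (s, r, o) in ranked:
        fact = f"{s} {r.replace('_', ' ')} {o}."
        cost = len(fact.split())  # crude stand-in for a token count
        if used + cost > budget_words:
            break
        lines.append(fact)
        used += cost
    return "\n".join(lines)

graph = build_graph(TRIPLES)
ranked = retrieve(graph, seeds=["Acme"], max_hops=2)
context = pack_context(ranked, budget_words=12)
print(context)
```

Ranking by hop distance is the simplest possible priority. The point is only that the graph gives the retriever an explicit signal for deciding which facts earn a place in a limited prompt.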

Why this matters more than you might think

The most compelling insight from Larson's presentation is how GraphRAG fundamentally changes our approach to context management. Rather than treating retrieval as simply finding relevant documents, GraphRAG recognizes that the relationships between pieces of information are often as important as the information itself. This shift in thinking enables LLMs to better handle complex reasoning tasks where connections between facts matter significantly.
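A small sketch can show why relationships matter for this kind of reasoning. The facts and the helper names (`keyword_hits`, `connecting_facts`) are hypothetical and not taken from the presentation. A flat lookup that keeps only facts mentioning the query's entities misses the bridging fact, while a breadth-first search over the graph recovers the full chain linking them.

```python
from collections import deque

# Hypothetical facts, invented for illustration only.
EDGES = [
    ("Acme", "acquired", "Beta Labs"),
    ("Beta Labs", "develops", "RouteOpt"),
    ("RouteOpt", "is used by", "Northwind Logistics"),
    ("Gamma Corp", "competes with", "Acme"),
]

def neighbors(entity):
    """Yield (edge, other_endpoint) for every edge touching the entity, ignoring direction."""
    for s, r, o in EDGES:
        if s == entity:
            yield (s, r, o), o
        elif o == entity:
            yield (s, r, o), s

def connecting_facts(start, goal):
    """Breadth-first search; returns the shortest chain of edges linking start to goal, or None."""
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        entity, path = queue.popleft()
        if entity == goal:
            return path
        for edge, other in neighbors(entity):
            if other not in visited:
                visited.add(other)
                queue.append((other, path + [edge]))
    return None

def keyword_hits(query_terms):
    """Flat baseline: keep only facts that literally mention a query entity."""
    return [e for e in EDGES if e[0] in query_terms or e[2] in query_terms]

query = {"Acme", "Northwind Logistics"}
flat = keyword_hits(query)
chain = connecting_facts("Acme", "Northwind Logistics")

print("Flat lookup:", flat)
print("Graph chain:", chain)
```

Here the flat lookup returns three facts but omits "Beta Labs develops RouteOpt", the one link needed to explain how the two companies are connected. The graph search returns exactly the three-step chain.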

This innovation comes at a crucial moment in the AI industry's evolution. As organizations push to deploy LLMs in increasingly sophisticated business workflows, the limitations of traditional RAG approaches have become painfully apparent. Enterprise users frequently encounter scenarios
