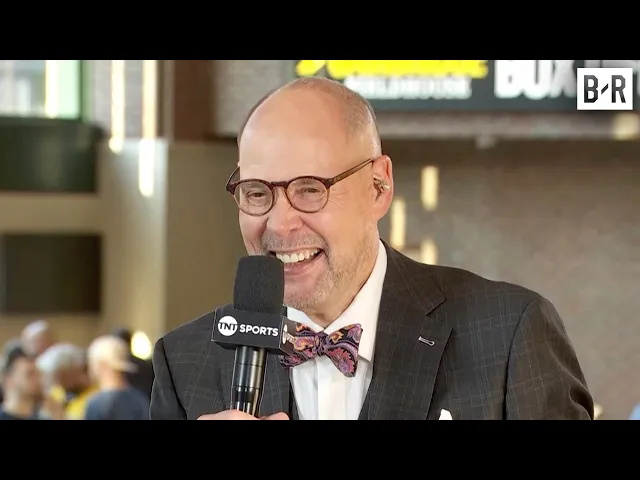

Google AI Result Says Ernie Johnson is Black 😂

AI mishaps reveal digital bias problem

In the world of sports commentary, few shows carry the cultural weight and viewer loyalty of TNT's "Inside the NBA." A recent segment from the show has gone viral, highlighting a significant issue with artificial intelligence that business leaders should take note of. When the crew discovered that Google's AI system incorrectly identified host Ernie Johnson as Black, their humorous reaction sparked broader conversations about algorithmic bias and the current limitations of AI systems that organizations are rapidly adopting.

Key insights from the incident:

- AI systems still struggle with basic identification tasks – Despite billions in development, Google's AI incorrectly categorized Ernie Johnson's race, revealing fundamental flaws in how these systems interpret and categorize human characteristics.

- Public visibility of AI failures is increasing – The lighthearted response from the Inside the NBA crew (particularly Shaquille O'Neal and Charles Barkley) demonstrates how AI mistakes are increasingly becoming public conversation topics rather than hidden technical issues.

- Algorithmic bias remains a persistent challenge – Even major platforms like Google continue to produce biased or incorrect results when handling race, gender, and other human characteristics, despite years of work on the problem.

Why this matters beyond comedy

The most revealing aspect of this incident isn't just the humorous reaction but what it tells us about AI readiness for business applications. When a platform as sophisticated and well-funded as Google's AI can make such a fundamental error about something as basic as a public figure's racial identity, it raises critical questions about AI reliability in more complex business contexts.

This matters tremendously for organizations across sectors. As businesses increasingly deploy AI for everything from customer service to hiring decisions, the possibility of embedded bias or simple factual errors poses significant risks. A hiring AI that misidentifies candidate characteristics could create legal exposure. A customer service AI that makes similarly flawed assumptions might damage relationships with key market segments.

The stakes extend beyond just embarrassment or viral moments. Studies from MIT and Stanford have consistently shown that even the most advanced vision and language AI systems exhibit measurable biases in how they process human characteristics including race, gender, and age. The business implications range from regulatory concerns to reputation damage and lost opportunities.

Beyond what the video showed

What makes this challenge particularly difficult is that bias often appears in unexpected places. Consider Microsoft's
